by David | Mar 4, 2024 | Azure, Information Technology, PowerShell

Reading Time: 4 minutes

Recently I was working with a company that gave me a really locked-down account. I wasn’t used to this, as I have always had some level of read-only access in each system. I was unable to create a Graph API application either, so I was limited to just my account. This was a great time to use the newer command lines for Graph API because, when you connect to Graph API using the PowerShell module, you inherit the access your account has. So today we will Get Intune Devices with PowerShell and Graph API.

The Script

Function Get-IntuneComputer {
    [cmdletbinding()]
    param (
        [string[]]$Username,
        [switch]$Disconnect
    )
    begin {
        #Connects to Graph API
        #Installs the module if it is not available on this machine
        #(-ListAvailable checks installed modules, not just the ones loaded in this session)
        if ($null -eq (Get-Module -ListAvailable -Name Microsoft.Graph.Intune)) {Install-Module Microsoft.Graph.Intune}
        #Imports module
        Import-Module Microsoft.Graph.Intune
        #Tests current Connection with a known computer
        $test = Get-IntuneManagedDevice -Filter "deviceName eq 'AComputer'"
        #If the test is empty, then we connect
        if ($null -eq $test) {Connect-MSGraph}
    }
    process {
        #Checks to see if the username flag was used
        if ($PSBoundParameters.ContainsKey('Username')) {
            #if it was used, then we go through each username and get information
            $ReturnInfo = foreach ($User in $Username) {
                Get-IntuneManagedDevice -Filter "userPrincipalName eq '$User'" | Select-Object deviceName,lastSyncDateTime,manufacturer,model,isEncrypted,complianceState
            }
        } else {
            #Grabs all of the devices and simple common information.
            $ReturnInfo = Get-IntuneManagedDevice | Get-MSGraphAllPages | Select-Object deviceName,lastSyncDateTime,manufacturer,model,isEncrypted,complianceState,userDisplayName,userPrincipalName
        }
    }
    end {
        #Returns the information
        $ReturnInfo
        #Disconnects if we want it.
        if ($Disconnect) {Disconnect-MgGraph}
    }
}

The Breakdown

Parameters

We enter the script with the common parameters. First is the CmdletBinding attribute, which gives us additional parameters like Verbose. Next, we have an array of strings called Username. We are using an array of strings to allow multiple inputs. Doing this, we should be able to pass a list of usernames and get their Intune device information. Note that because this parameter takes multiple inputs, you will need to deal with it with a loop later. Next is the Disconnect switch. It’s either true or not. By default, this script will stay connected to Intune.
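To make the parameter behavior concrete, here is a minimal sketch that needs no Intune connection. The function name Show-UserList and the sample addresses are made up for illustration; only the parameter block mirrors the script above.

```powershell
# Hypothetical helper, not part of the original script.
function Show-UserList {
    [CmdletBinding()]
    param (
        [string[]]$Username,   # accepts one or many usernames
        [switch]$Disconnect    # $true only when the flag is present
    )

    # A string array parameter has to be walked with a loop.
    foreach ($User in $Username) {
        Write-Verbose "Processing $User"
        "Would look up devices for $User"
    }

    if ($Disconnect) { 'Would disconnect now' }
}

$result = Show-UserList -Username 'alice@example.com', 'bob@example.com' -Disconnect
$result
```

Adding -Verbose to the call would also show the Write-Verbose lines, which is one of the extras CmdletBinding gives us.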

Connecting to Intune

Next we will connect to the Intune system. First, we need to check for and install the module. We check the install by using the Get-Module command with -ListAvailable, which looks at the modules installed on the machine and not only the ones already loaded in the session. We are looking for the Microsoft.Graph.Intune module. If it doesn’t exist, we want to install it.

if ($null -eq (Get-Module -ListAvailable -Name Microsoft.Graph.Intune)) {Install-Module Microsoft.Graph.Intune}

If the module does exist, we will simply skip the install and move to the import. We will be importing the same module.

Import-Module Microsoft.Graph.Intune

Afterwards, we want to test the connection to Microsoft Intune. The best way to do this is to test a command. You can do it however you want. I am testing against a device that is in Intune.

$test = Get-IntuneManagedDevice -Filter "deviceName eq 'AComputer'"

We will be using this command later. Notice the filter. We are filtering on the deviceName here. Replace the ‘AComputer’ with whatever you want. If you want to use another command, feel free. This was the fastest command that I tested. The above command will produce a null response if you are not connected. Thus, we can test $test with an if statement. If it comes back with information, we are good, but if it is null, we tell it to connect.

if ($null -eq $test) {Connect-MSGraph}

Get Intune Devices with PowerShell

Now it’s time to Get Intune Devices with PowerShell. The first thing we check is whether the Username parameter was used. We didn’t make this parameter mandatory, to give the script flexibility. Now, we need to code for said flexibility. If the command contained the Username flag, we want to honor that usage. We do this with $PSBoundParameters. This automatic variable holds the parameters, the -Name flags that come after the command, that were actually passed in. We are looking to see if it contains a key of Username. If it does, we grab the needed information for each username. If it doesn’t, we grab everything.

if ($PSBoundParameters.ContainsKey('Username')) {
    #Grab based on username
} else {
    #get every computer
}
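If you want to see the bound parameter check on its own, here is a small sketch you can paste into any console. Test-UsernameFlag is a made-up name for this demo; the ContainsKey call is the same one used in the script.

```powershell
# Hypothetical demo function, not part of the original script.
function Test-UsernameFlag {
    [CmdletBinding()]
    param ([string[]]$Username)

    # $PSBoundParameters only contains parameters the caller actually passed.
    if ($PSBoundParameters.ContainsKey('Username')) {
        "Username supplied: $($Username -join ', ')"
    } else {
        'No username supplied, returning everything'
    }
}

$withFlag    = Test-UsernameFlag -Username 'alice@example.com'
$withoutFlag = Test-UsernameFlag
$withFlag
$withoutFlag
```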

As we mentioned, the string array parameter needs a loop, and this is where we are going to do that. We first create a foreach loop over the users in Username. Here, we will dump the gathered information into a return variable, $ReturnInfo. Inside our loop, we gather the required information. The Get-IntuneManagedDevice command filter will need to use the userPrincipalName. These filters are OData filter strings sent to Graph, not PowerShell object filters. Thus, the PowerShell like operator will cause issues. This is why we are using the eq operator.

Now, if we are not searching the Username, we want to grab all the devices on the network. This way if you run the command without any flags, you will get information. Here, we use the Get-IntuneManagedDevice followed by the Get-MSGraphAllPages to capture all the pages in question.

if ($PSBoundParameters.ContainsKey('Username')) {
    $ReturnInfo = foreach ($User in $Username) {
        Get-IntuneManagedDevice -Filter "userPrincipalName eq '$User'"
    }
} else {
    $ReturnInfo = Get-IntuneManagedDevice | Get-MSGraphAllPages
}
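One thing to watch with the filter string is a username that contains an apostrophe. Below is a rough sketch of a helper that builds the same filter and doubles any single quote, which is how OData string literals escape them. New-UpnFilter is a name I made up for this example; it is not part of the Intune module.

```powershell
# Hypothetical helper that builds the filter text used by the script.
function New-UpnFilter {
    param (
        [Parameter(Mandatory)]
        [string]$UserPrincipalName
    )

    # OData string literals escape a single quote by doubling it.
    $escaped = $UserPrincipalName.Replace("'", "''")
    "userPrincipalName eq '$escaped'"
}

$plain  = New-UpnFilter -UserPrincipalName 'alice@example.com'
$quoted = New-UpnFilter -UserPrincipalName "pat.o'brien@example.com"
$plain
$quoted
```

The result can then be handed to the -Filter parameter in place of the inline string.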

Ending the Script

Now it’s time to end the script. We want to return the information gathered. I want to know some basic information. The commands presented produce a large amount of data. In this case we will be selecting the following:

- DeviceName

- LastSyncDateTime

- Manufacturer

- Model

- isEncrypted

- ComplianceState

- UserDisplayName

- UserPrincipalName

$ReturnInfo | select-object deviceName,lastSyncDateTime,manufacturer,model,isEncrypted,complianceState,userDisplayName,userPrincipalName
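Here is a quick sketch of what Select-Object is doing for us, using a hand-built object instead of a real Intune device. The values are invented sample data.

```powershell
# Sample object standing in for a device record; the values are invented.
$device = [pscustomobject]@{
    deviceName      = 'LAPTOP-01'
    model           = 'Example 5000'
    complianceState = 'compliant'
    serialNumber    = 'ABC123'
    wiFiMacAddress  = '001122334455'
}

# Keep only the properties we care about right now.
$trimmed = $device | Select-Object deviceName, model, complianceState
$names   = $trimmed.PSObject.Properties.Name
$trimmed
```

The original object still has everything; we just get a smaller view that is easier to read.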

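Finally, we test to see if we wanted to disconnect. A simple if statement does this. If we choose to disconnect, we run the Disconnect-MgGraph command. Keep in mind that Disconnect-MgGraph comes from the Microsoft.Graph.Authentication module, not Microsoft.Graph.Intune, so that module needs to be installed for the switch to work.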

if ($Disconnect) {Disconnect-MgGraph}

What can we learn as a person

In PowerShell, we can streamline the output that we get. Oftentimes commands like these produce a lot of information that is useful in general but not useful at the moment. This is like our work environment. I used to be a big advocate of dual, if not more, screens. I would often have 5 things going on at once. My desk used to have everything I needed to quickly grab and solve a personal problem. For example, my chapstick sat on my computer stand. My water bottle sat beside the monitor. Papers, sticky notes, and more were all scattered across my desk. I wondered why I couldn’t focus. Our brains are like batteries. How much focus we have is the charge. Our brains take in everything. Your brain notices the speck of dirt on the computer monitor and the sticky note, without your password on it, hanging from your monitor. This takes your charge.

Having two monitors is great and I still use two. However, I have a focused monitor and a second monitor for when I need to connect to something else. At some point I will get a larger, wider monitor and drop the second one altogether. Having less allows your brain to put more attention on one or two tasks. I usually have more than one task going at any moment. That’s ok with my brain. Let’s use our Select-Object command in real life and remove the distractions from our desks.


by David | Feb 26, 2024 | Blog, Help Desk, Information Technology, Lessons

Reading Time: 2 minutes

In the intricate ecosystem of IT support, the quality of communication in ticket submissions can significantly influence the efficiency of problem resolution. Imagine walking into a dense forest, each tree representing a different issue or ticket awaiting resolution. Just as a seasoned guide can navigate these woods with ease, providing clear paths and descriptions, a Subject Matter Expert (SME) in IT can illuminate the way to swift solutions with well-crafted tickets.

The Spectrum of Ticket Details

Venture into the thicket of daily IT support tickets, and you’ll encounter a wide array of communication styles. On one end, there are tickets like faint, barely noticeable trails – vague, minimal details offered by users unsure of what information is pertinent. Bob from manufacturing, for example, might simply state, “My computer won’t turn on,” leaving the path to resolution obscured by underbrush.

Contrastingly, tickets from more technically adept users, like Jan from accounting, are akin to well-trodden paths through the forest, marked by signs and clear directions. Jan not only mentions reinserting cables and attempting to power on her computer but also notes the absence of the usual boot-up text, laying breadcrumbs for IT support to follow towards a solution.

Crafting a Map to Resolution

Subject Matter Experts (SMEs) stand as the rangers of this forest, armed with the knowledge and tools to guide others through even the densest undergrowth. Here’s how they can effectively chart the course:

- Know Your Audience: Just as a ranger alters their guidance based on the experience of the hikers, SMEs should tailor their ticket submissions to the technical level of the IT support team. This ensures that the instructions are neither too complex for general support staff nor too simplistic for specialists.

- Use a Structured Format: A structured ticket is like a map, offering a clear overview of the terrain at a glance. By organizing the issue, steps taken, and potential solutions logically, SMEs create a guide that others can follow easily, avoiding unnecessary detours.

- Prioritize Clarity and Brevity: In the dense forest of IT issues, clarity acts as a beacon, guiding the support team directly to the heart of the problem. SMEs should aim to illuminate the path with precise, concise language, ensuring no one gets lost in unnecessary details.

- Offer Potential Solutions: Suggesting solutions or workarounds is akin to marking potential paths on a map. While not all may lead directly to the destination, they provide starting points, accelerating the journey towards resolution.

- Include Visuals When Necessary: Sometimes, the most effective way to describe a landscape is through visuals. Diagrams, screenshots, and videos can serve as snapshots of the issue, offering immediate context and understanding.

- Encourage Open Communication: Ending a ticket with an invitation for questions is like leaving a trail of markers for others to follow, ensuring that if the path becomes unclear, further guidance is just a call away.

Navigating the Forest Together

In the realm of IT, “Subject Matter Expert Tickets” are more than just requests for assistance; they’re opportunities for SMEs to lead by example, demonstrating how detailed, well-structured communication can streamline the resolution process. It’s about creating a collaborative environment where every ticket, like a trail in the forest, is clearly marked and navigable, leading to a more efficient, effective IT support system.

By adopting these strategies, SMEs not only enhance their own credibility but also contribute to a culture of clarity and cooperation, ensuring that the vast forest of IT support is a little easier for everyone to navigate.

by David | Feb 19, 2024 | Deployments, Information Technology, Resources

Reading Time: 6 minutes

A few weeks ago, we built WordPress in Docker. Today I want to go deeper into the world of Docker. We will be working with a single WordPress instance, but we will be able to expand this setup beyond what is currently there over time. Unlike last time, we will be self-containerizing everything and adding plugins along with the LDAP PHP extension, which doesn’t natively come with the wordpress:latest image. It’s time to build a WordPress in Docker with LDAP.

Docker Files

As we all know, docker uses compose.yml files for its base configuration. This file processes the requested image based on the instructions in the compose. Last time we saw that we could mount the wp-content to our local file system to edit accordingly. The compose handles that. This time we are going about it a little differently. The compose file handles the configuration of basic items like mounting, volumes, networks, and more. However, it can’t really do much in the line of editing a docker image or adding to it. What the compose file can do is call upon a build command.

services:
  sitename_wp:
    build:
      context: .
      dockerfile: dockerfile

The build is always within the service that you want to work with. The context here is the path of the build. This is useful if you have the build files somewhere else, like a share. Then the dockerfile will be the name of the build file. I kept it simple and went with dockerfile. This means there are now two files: the docker-compose.yml and this dockerfile.

What Is the Dockerfile?

The dockerfile takes an image and builds on top of it. It has some limitations. Each instruction can add another layer, which adds to the overall size of the image. Non-persistence is the next problem: the instructions run once, at build time, and anything a running container writes outside of a volume is gone when the container is removed. The build executes one instruction at a time, from top to bottom, so it can’t handle multiple things at once. It’s very linear in its nature. If/then and other control structures are not part of the dockerfile syntax itself, which makes it a poor fit as a programming language. There are limits to versioning.

The dockerfile cannot publish ports or define networks; EXPOSE only documents a port, and the real mapping happens in the compose file. User management is limited to the USER instruction and whatever you script yourself. Complexity is a big problem with these files: the more complex they get, the harder they are to maintain. Never handle passwords inside the dockerfile. The dockerfile can’t see the runtime environment variables from your compose file; during the build it only knows what you give it through ARG and ENV. The thing that hit me the hardest was the limited apt-get/yum commands. Build context is important, as a large context can slow down the build. Finally, a dockerfile may not work on all hosts.

With those items out of the way, docker files can do a lot of other good things like layering additional items to a docker image. The container treats these files as root and runs them during the build. This means you can install programs, move things around and more. It’s time to look at our dockerfile for our WordPress in Docker with LDAP.

The Dockerfile

# Use the official WordPress image as a parent image
FROM wordpress:latest

# Update package list and install dependencies
RUN apt-get update && \
    apt-get install -y \
    git \
    nano \
    wget \
    libldap2-dev

# Configure and install PHP extensions
RUN docker-php-ext-configure ldap --with-libdir=lib/x86_64-linux-gnu/ && \
    docker-php-ext-install ldap

# Clean up
RUN rm -rf /var/lib/apt/lists/*

# Clone the authLdap plugin from GitHub
RUN git clone https://github.com/heiglandreas/authLdap.git /var/www/html/wp-content/plugins/authLdap

# Add custom PHP configuration
RUN echo 'file_uploads = On\n\
memory_limit = 8000M\n\
upload_max_filesize = 8000M\n\
post_max_size = 9000M\n\
max_execution_time = 600' > /usr/local/etc/php/conf.d/uploads.ini

The Breakdown

Right off the bat, our FROM calls down the wordpress:latest image. This is the image we will be using. This is our base layer. Then we want to RUN our first command. RUN instructions like to have related commands grouped together. Remember, every command is run as the container’s root. The first RUN command will contain two commands: the apt-get update and the install. We are installing git, so we can grab a plugin; nano, so we can edit files; wget, for future use; and libldap2-dev, the LDAP library PHP needs.

apt-get update &&\

apt-get install -y git nano wget libldap2-dev

Please notice the && \. The \ means to treat the next line as part of this command. The && means and: the next command only runs if the one before it succeeded. The && allows you to run multiple commands on the same line. Since each RUN is a single line, this is very important. The libldap2-dev package is the LDAP library that the PHP ldap extension is built against. Our next RUN configures the docker PHP extension.

The Run Commands

RUN docker-php-ext-configure ldap --with-libdir=lib/x86_64-linux-gnu/ && \

docker-php-ext-install ldap

The docker-php-ext-* commands are scripts built into our WordPress image. We tell the configure script where our new libraries are located for PHP. Then we tell PHP to install the ldap extension. After we have it installed, we need to do some cleanup with the next RUN command.

rm -rf /var/lib/apt/lists/*

At this point, we have WordPress in Docker with the LDAP PHP modules. Now I want a cheap, easy-to-use plugin for LDAP. I like the authLdap plugin. We will use the git command that we installed above and clone the repo for this plugin. Then we drop that plugin into the WordPress plugin folder. This is our next RUN command.

git clone https://github.com/heiglandreas/authLdap.git /var/www/html/wp-content/plugins/authLdap

In our previous blog, we used a printf command to make an uploads.ini file. Well, we don’t need that. You can do this here. We trigger our final RUN command. This time it will be echo. Echo just says stuff. So we echo all the PHP settings into our uploads.ini within the image.

# Add custom PHP configuration

RUN echo 'file_uploads = On\n\

memory_limit = 8000M\n\

upload_max_filesize = 8000M\n\

post_max_size = 9000M\n\

max_execution_time = 600' > /usr/local/etc/php/conf.d/uploads.ini

Docker Compose

Now we have our Dockerfile built out. It’s time to build out our new docker compose file. Here is the compose file for you to read.

version: '3.8'
services:
  sitename_wp:
    build:
      context: .
      dockerfile: dockerfile
    ports:
      - "8881:80"
      - "8882:443"
    environment:
      WORDPRESS_DB_HOST: sitename_db:3306
      WORDPRESS_DB_USER: ${WORDPRESS_DB_USER}
      WORDPRESS_DB_PASSWORD: ${WORDPRESS_DB_PASSWORD}
      WORDPRESS_DB_NAME: ${MYSQL_DATABASE}
      WORDPRESS_AUTH_KEY: ${WORDPRESS_AUTH_KEY}
      WORDPRESS_SECURE_AUTH_KEY: ${WORDPRESS_SECURE_AUTH_KEY}
      WORDPRESS_LOGGED_IN_KEY: ${WORDPRESS_LOGGED_IN_KEY}
      WORDPRESS_NONCE_KEY: ${WORDPRESS_NONCE_KEY}
      WORDPRESS_AUTH_SALT: ${WORDPRESS_AUTH_SALT}
      WORDPRESS_SECURE_AUTH_SALT: ${WORDPRESS_SECURE_AUTH_SALT}
      WORDPRESS_LOGGED_IN_SALT: ${WORDPRESS_LOGGED_IN_SALT}
      WORDPRESS_NONCE_SALT: ${WORDPRESS_NONCE_SALT}
    volumes:
      - sitename_wp_data:/var/www/html
    depends_on:
      - sitename_db
    networks:
      - sitename_net_wp
  sitename_db:
    image: mysql:5.7
    volumes:
      - sitename_wp_db:/var/lib/mysql
    environment:
      MYSQL_ROOT_PASSWORD: ${MYSQL_ROOT_PASSWORD}
      MYSQL_DATABASE: ${MYSQL_DATABASE}
      MYSQL_USER: ${MYSQL_USER}
      MYSQL_PASSWORD: ${MYSQL_PASSWORD}
    networks:
      - sitename_net_wp
networks:
  sitename_net_wp:
    driver: bridge
volumes:
  sitename_wp_data:
  sitename_wp_db:

The WordPress in Docker with LDAP breakdown

First things first, notice everywhere you see the name “sitename”. To use this docker setup correctly, you must replace that name with your own. This will allow you to build multiple sites within their own containers, networks, and more. As stated before, the first thing we come across is the build area. This is where we tell the system where our dockerfile lives. Context is the path to the file in question and dockerfile is the file above.

Next are the ports. We are working with port 8881:80. This is where you choose the ports that you want. The first number is the port your system will reach out to, and the second number is the port that your container will understand. Our SSL port is 8882, which is the standard 443 on the container’s side.

ports:

- "8881:80"

- "8882:443"

Next are the environment variables. If you notice, some of the items have ${codename} instead of data. These are variables that will pull the data externally. This approach prevents embedding the secrets inside the compose file. The volume is the next part of this code. Instead of giving a physical location, we are giving it a named volume, which we will declare later. Next, we state that the WordPress service is dependent on the MySQL service. Finally, we select a network to tie this container to. The process is the same for the database side.

Finally, we declare our network under networks. This network will have its own unique name, as you see the sitename is within the network name. We set this network to bridge, so the containers on it can talk to each other and, through the published ports, be reached from the outside world. Finally, we declare our volumes as well.

The hidden environment file

The next file is the environment file. For every ${codename} inside the compose file, we need an environment variable to match it. Some special notes about the salts for WordPress: special symbols, such as a $ or an =, in these values can cause docker to break down, since compose can read a $ as the start of another variable. It is wise to use numbers and letters only. Here is an example:

WORDPRESS_DB_USER=sitename_us_wp_user

WORDPRESS_DB_PASSWORD=iamalooserdog

MYSQL_ROOT_PASSWORD=passwordsareforloosers

MYSQL_DATABASE=sitename_us_wp_db

MYSQL_USER=sitename_us_wp_user

MYSQL_PASSWORD=iamalooserdog

WORDPRESS_AUTH_KEY=4OHEG7ZKzXd9ysh5lr1gR66UPqNEmCtI5jjYouudEBrUCMtZiS1WVJtyxswfnlMG

WORDPRESS_SECURE_AUTH_KEY=m0QxQAvoTjk6jzVfOa8DexRyjAxRWoyq08h1fduVSHW0z2o4NU2q7SjKoUvC3cJz

WORDPRESS_LOGGED_IN_KEY=9LxfBFJ5HyAtbrzb0eAxFG3d9DNkSzODHmPaY6kKIsSQDiVvbkw0tC71J98mDdWe

WORDPRESS_NONCE_KEY=kKMXJdUTY0b6xZy0bLW9YALpuNHcZfow6lDZbRqqlaNPmsLQq45RhKdCNPt34fai

WORDPRESS_AUTH_SALT=IFt5xLir4ozifs9v8rsKTxZBFCNzVWHrpPZe8uG0CtZWTqEBhh9XLqya4lBIi9dQ

WORDPRESS_SECURE_AUTH_SALT=DjkPBxGCJ14XQP7KB3gCCvCjo8Uz0dq8pUjPB7EBFDR286XKOkdolPFihiaIWqlG

WORDPRESS_LOGGED_IN_SALT=aNYWF5nlIVWnOP1Zr1fNrYdlo2qFjQxZey0CW43T7AUNmauAweky3jyNoDYIhBgZ

WORDPRESS_NONCE_SALT=I513no4bd5DtHmBYydhwvFtHXDvtpWRmeFfBmtaWDVPI3CVHLZs1Q8P3WtsnYYx0

As always, grab your salts from an official source if you can make it work. Here is the WordPress Official source site. You can also use PowerShell to give you a single password, take a look here. Of course, replace everything in this file with your own passwords. If you have the scripting knowledge, you can auto-generate much of this.
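As a rough sketch of that auto-generation idea, the snippet below builds letters-and-numbers-only values for the eight WordPress keys and salts. The function name New-AlphaNumericSecret is my own invention, and the 64 character length simply matches the sample values above; adjust both to taste.

```powershell
# Hypothetical helper: returns a random string of letters and digits only.
function New-AlphaNumericSecret {
    param ([int]$Length = 64)

    $chars   = 'ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789'
    $rng     = [System.Security.Cryptography.RandomNumberGenerator]::Create()
    $buffer  = New-Object byte[] 1
    $builder = New-Object System.Text.StringBuilder

    try {
        while ($builder.Length -lt $Length) {
            $rng.GetBytes($buffer)
            # 248 is the largest multiple of 62 below 256; skipping higher bytes avoids modulo bias.
            if ($buffer[0] -lt 248) {
                [void]$builder.Append($chars[$buffer[0] % $chars.Length])
            }
        }
    } finally {
        $rng.Dispose()
    }

    $builder.ToString()
}

# Variable names taken from the compose file above.
$names = 'WORDPRESS_AUTH_KEY', 'WORDPRESS_SECURE_AUTH_KEY', 'WORDPRESS_LOGGED_IN_KEY', 'WORDPRESS_NONCE_KEY',
         'WORDPRESS_AUTH_SALT', 'WORDPRESS_SECURE_AUTH_SALT', 'WORDPRESS_LOGGED_IN_SALT', 'WORDPRESS_NONCE_SALT'

$envLines = foreach ($name in $names) { "$name=$(New-AlphaNumericSecret)" }
$envLines
```

You could pipe $envLines to Add-Content to append them to your environment file once the database entries are in place.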

Bring Docker to Life

Now we have all of our files created. It’s finally time to bring our creation to life. Run the following command:

If you notice, there is additional information that appears. The dockerfile will run and you can watch it as it runs. If there are errors, you will see them here. Oftentimes, the errors will be syntax issues. Docker is really good at showing you what is wrong. So, read the errors and try finding the answer online.

What can we learn as a person today?

Men are born soft and supple; dead, they are stiff and hard. Plants are born tender and pliant; dead, they are brittle and dry. Thus whoever is stiff and inflexible is a disciple of death. Whoever is soft and yielding is a disciple of life. The hard and stiff will be broken. The soft and supple will prevail.

Lao Tzu

In seeking assistance from forums like the sysadmin subreddit or Discord channels, I often encounter rigid advice, with people insisting on a singular approach. This rigidity echoes Lao Tzu’s words: “Men are born soft and supple; dead, they are stiff and hard… The hard and stiff will be broken. The soft and supple will prevail.” In professional settings, flexibility and adaptability are crucial. Entering a new company with an open mindset, ready to consider various methods, enables us to navigate around potential obstacles effectively. Conversely, inflexibility in our career, adhering strictly to one method, risks stagnation and failure. Embracing adaptability is not just about avoiding pitfalls; it’s about thriving amidst change. Lao Tzu’s wisdom reminds us that being pliant and receptive in our careers, much like the living beings he describes, leads to resilience and success.

by David | Feb 12, 2024 | Information Technology, PowerShell

Reading Time: 4 minutes

In the intricate web of modern network management, the security and integrity of user accounts stand paramount. “AD User Audit with PowerShell” isn’t just a technical process; it’s a critical practice for any robust IT infrastructure. Why, you ask? The answer lies in the layers of data and accessibility that each user account holds within your organization’s Active Directory (AD). Whether it’s for compliance, security, or efficient management, auditing user accounts is akin to a health check for your network’s security posture.

Understanding the last login times, password policies, and group memberships doesn’t only highlight potential vulnerabilities; it also paves the way for proactive management and policy enforcement. PowerShell, with its powerful scripting capabilities, emerges as an indispensable tool in this endeavor. It transforms what could be a tedious manual audit into an efficient, automated process.

Why Perform an AD User Audit?

An “AD User Audit with PowerShell” serves multiple purposes:

- Security: Identifying inactive accounts or those with outdated permissions reduces the risk of unauthorized access.

- Compliance: Regular audits ensure adherence to internal and external regulatory standards.

- Operational Efficiency: Understanding user activities and configurations aids in optimizing network management.

The Script:

Import-Module ActiveDirectory

Function Get-PasswordExpiryDate {
    param (
        [DateTime]$PasswordLastSet,
        [System.TimeSpan]$MaxPasswordAge
    )
    return $PasswordLastSet.AddDays($MaxPasswordAge.TotalDays)
}

$MaxPasswordAge = (Get-ADDefaultDomainPasswordPolicy).MaxPasswordAge

Get-ADUser -Filter * -Properties Name, samaccountname, Enabled, LastLogonDate, PasswordLastSet, MemberOf, LockedOut, whenCreated |
    Select-Object Name, samaccountname, Enabled, LastLogonDate, PasswordLastSet, @{Name="PasswordExpiryDate"; Expression={Get-PasswordExpiryDate -PasswordLastSet $_.PasswordLastSet -MaxPasswordAge $MaxPasswordAge}}, @{Name="GroupMembership";Expression={($_.MemberOf | Get-ADGroup | Select-Object -ExpandProperty Name) -join ", "}}, LockedOut, whenCreated |
    Export-Csv -Path "c:\temp\AD_User_Audit_Report.csv" -NoTypeInformation

The Breakdown

The first thing we do in this PowerShell script is import the module. We are importing ActiveDirectory. Then we set up a nice little function to help grab the expiry date of the password. The function takes the date the password was last set and the maximum password age as its parameters, and adds the time span to the date. Next we grab the data we need. That’s the Name, SamAccountName, Enabled, LastLogonDate, PasswordLastSet, MemberOf, LockedOut, and finally whenCreated. From there we use Select-Object. This is where some of the good stuff happens. When we select the password expiry date, we are going to use the @ name and expression. Let’s break that down some.

Special Select

@{Name="PasswordExpiryDate"; Expression={Get-PasswordExpiryDate -PasswordLastSet $_.PasswordLastSet -MaxPasswordAge $MaxPasswordAge}},

Inside the Select-Object command, you can select existing properties and create custom properties. Having a name or label is the first part. The Expression can be its own command line. Here we have the function from earlier. We are taking Get-ADUser’s PasswordLastSet and running it through Get-PasswordExpiryDate. Make sure to wrap the expression inside curly brackets. We can do this same thing with the group membership.

The Group Membership pulls apart the MemberOf property and passes it through Get-ADGroup. From there we grab the name of each group. Now, since we want to export this into a CSV, it is best to use a join so all the groups fit in one cell. Once we have that information set up, we export it to a CSV. Then we are done. This information can then be passed on to the security admin to work with.
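If you don’t have a domain handy, here is a small sketch of the same calculated property trick against a hand-built object. The user, the dates, the groups, and the 90 day policy are all invented sample values.

```powershell
# Invented stand-ins for the domain policy and a user record.
$maxAge = New-TimeSpan -Days 90
$user = [pscustomobject]@{
    Name            = 'Sample User'
    PasswordLastSet = [datetime]'2024-01-01'
    MemberOf        = 'Staff', 'VPN Users'
}

# Each @{Name=...; Expression={...}} hashtable becomes a new column in the output.
$report = $user | Select-Object Name,
    @{Name = 'PasswordExpiryDate'; Expression = { $_.PasswordLastSet + $maxAge }},
    @{Name = 'GroupMembership';    Expression = { $_.MemberOf -join ', ' }}

$report
```

The join is what turns the list of groups into a single string, which is what keeps the CSV column readable.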

Script Deployment and Usage

The execution of this script in your environment is straightforward but requires administrative privileges. It’s designed to run seamlessly, outputting a comprehensive report that encapsulates the health and status of each user account in your Active Directory. This report not only informs your immediate security strategies but also assists in long-term IT planning and policy development.

What can we learn as a person today?

Just as “AD User Audit with PowerShell” ensures the health of an IT infrastructure, journaling serves as a personal audit. It’s a practice that offers self-reflection, tracking progress, and identifying areas for improvement in our personal lives.

Self-Reflection

Journaling is a gateway to the soul, a mirror reflecting our deepest thoughts and feelings. In the quiet moments with our journal, we engage in an intimate conversation with ourselves. This practice allows us to confront our fears, celebrate our successes, and ponder our aspirations. It’s a safe space where we can express emotions without judgment, helping us understand and accept who we are.

Imagine a day filled with stress and uncertainty, where words left unsaid create a storm inside you. In your journal, these unspoken thoughts find a voice. You write about the meeting that didn’t go as planned, the conversation with a friend that left you unsettled. As the words flow, there’s a sense of unburdening, a weight lifting off your shoulders. It’s in this self-reflection that clarity emerges, often bringing peace and a newfound understanding of your emotional landscape.

Tracking Progress

Like a lighthouse guiding ships through a stormy sea, journaling illuminates our path through life’s complexities. It helps us track where we’ve been and where we’re heading, offering insights into our personal evolution. By regularly documenting our experiences, thoughts, and feelings, we create a tangible record of our journey. This practice can reveal patterns in our behavior and responses, helping us recognize both our strengths and areas for growth.

Consider a journal entry from a year ago, describing feelings of apprehension about starting a new venture. Fast forward to today, and your journal tells a different story—one of growth, learning, and newfound confidence. The contrast between then and now is stark, yet it’s a powerful testament to your resilience and adaptability. Reading your past entries, you feel a surge of pride in how far you’ve come, reinforcing your belief in your ability to face future challenges.

Identifying Areas for Improvement

Journaling not only captures our current state but also acts as a compass, pointing out the areas in our life that need attention. It can highlight recurring issues, whether in relationships, work, or personal habits, prompting us to seek solutions or make necessary changes. This process of self-audit through journaling encourages us to be honest with ourselves, to confront uncomfortable truths, and to take actionable steps towards betterment.

Imagine writing about the same problem repeatedly—a strained relationship with a colleague or a persistent feeling of dissatisfaction. Seeing these recurring themes in your journal can be an eye-opener, signaling a need for change. It might inspire you to initiate a difficult conversation, seek external advice, or explore new opportunities. The journal becomes a catalyst for transformation, nudging you towards decisions and actions that align with your true desires and values.

Final Thoughts

In each stroke of the pen, journaling offers a chance for introspection, growth, and healing. It’s our personal audit, tracking our emotional health and guiding us towards a more mindful and fulfilling life. Just as “AD User Audit with PowerShell” ensures the health of our digital environments, journaling safeguards the wellbeing of our inner world.


by David | Feb 5, 2024 | Information Technology, PowerShell

Reading Time: 5 minutes

Today we are going to go over how to create hundreds of users at once using PowerShell in Active Directory. This is great for building a home lab to test things out with. To learn how to build your own AD lab, you can look over this video. Towards the end of this video he shows you how to do the same thing, but today I am going to show you a simple way to get unique information. This way you can use PowerShell to Create Bulk Users in your Active Directory.

The Script

$DomainOU = "DC=therandomadmin,dc=com"

$Domain = "therandomadmin.com"

$Users = import-csv C:\temp\ITCompany.csv

$OUs = $users | Group-Object -Property StateFull | Select-Object -ExpandProperty Name

New-ADOrganizationalUnit -Name "Employees" -Path "$DomainOU"

$EmployeePath = "OU=Employees,$($DomainOU)"

foreach ($OU in $OUs) {

New-ADOrganizationalUnit -Name $OU -Path $EmployeePath

}

foreach ($user in $Users) {

$Param = @{

#Name

GivenName = $User.GivenName

Surname = $User.Surname

DisplayName = "$($User.GivenName) $($User.MiddleInitial) $($User.Surname)"

Name = "$($User.GivenName) $($User.MiddleInitial) $($User.Surname)"

Description = "$($user.City) - $($User.Color) - $($user.Occupation)"

#Email and Usernames

EmailAddress = "$($User.GivenName).$($User.MiddleInitial).$($User.Surname)@$($Domain)"

UserPrincipalName = "$($User.GivenName).$($User.MiddleInitial).$($User.Surname)@$($Domain)"

SamAccountName = "$($User.GivenName).$($User.MiddleInitial).$($User.Surname)"

#Contact Info

StreetAddress = $user.StreetAddress

City = $user.City

State = $user.State

Country = $user.Country

HomePhone = $user.TelephoneNumber

#Company Info

Company = "DPB"

Department = $user.Color

Title = $user.Occupation

EmployeeID = $user.Number

EmployeeNumber = $user.NationalID.replace("-",'')

Division = $user.State

#Account Data

Enabled = $true

ChangePasswordAtLogon = $false

AccountPassword = ConvertTo-SecureString -String "$($user.Password)@$($user.NationalID)" -AsPlainText -Force

Path = "OU=$($User.StateFull),$EmployeePath"

#Command

ErrorAction = "Stop" #Stop turns failures into terminating errors so the catch block below runs

Verbose = $true

}

try {

New-ADUser @Param

} catch {

Write-Error "$($User.GivenName) $($User.MiddleInitial) $($User.Surname)"

}

}

Bulk User File?

This script is very dependent on a CSV file that magically seems to appear. Well, it doesn’t. The first thing we need is a CSV of bulk users before we can create bulk users. To get one, you can navigate to a site called Fake Name Generator. This site allows you to quickly generate user information to use to build your lab.

- Navigate to the https://www.fakenamegenerator.com/.

- Click Order in Bulk

- Check the I agree check box

- Select Comma Separated (CSV) and set the compression to zip.

- Then select your name set. I selected American.

- Note: Some languages will cause issues with AD due to unique characters. If you do select this, make sure to correct for it.

- Select your country of choice. I chose the US.

- Select the age and gender ranges. You can keep these at the defaults.

- Then I selected All on the included fields.

- Select how many you want and enter your email.

- Note: A single OU doesn’t display more than 2000 users. This script creates sub OUs just for this case, based on the full state names.

- Then verify and place your order.

Once you have the file, we can get started explaining what we are going to do to Create Bulk Users in Active Directory with the Power of PowerShell.

The Breakdown

It’s time to break down this script. The first two lines are the domain information. I’m using therandomadmin.com as an example. The next line imports the bulk users CSV. These are the lines you want to change if you want to use this in your own lab. The line after that grabs the OU names. In this case, we want the full state names from the CSV. Next, we create the Employees OU that will host all of the other OUs.

New-ADOrganizationalUnit -Name "Employees" -Path "$DomainOU"

Now that we have the OU built, we will make a path for later by dropping the Employees OU and the domain OU into its own variable, $EmployeePath. Using this variable, we enter a foreach loop over the OUs and build a new OU for each name in $OUs.

foreach ($OU in $OUs) {

New-ADOrganizationalUnit -Name $OU -Path $EmployeePath

}
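If you want to see which OUs the loop would build before touching AD, the Group-Object step can be tried on a few in-memory rows. This is only a sketch: the sample rows are made up and stand in for the real CSV, and nothing here calls the ActiveDirectory module.

```powershell
# Hypothetical sample rows standing in for C:\temp\ITCompany.csv
$SampleCsv = @'
GivenName,MiddleInitial,Surname,StateFull
Jane,A,Doe,Texas
John,B,Roe,Ohio
Mary,C,Poe,Texas
'@

$Users = $SampleCsv | ConvertFrom-Csv

# Same technique as the script: one group per unique full state name
$OUs = $Users | Group-Object -Property StateFull | Select-Object -ExpandProperty Name

# Build the distinguished names the OUs would end up with
$EmployeePath = "OU=Employees,DC=therandomadmin,dc=com"
$OUPaths = foreach ($OU in $OUs) { "OU=$OU,$EmployeePath" }

$OUPaths
```

Three rows with two distinct states give two OU paths, which is the same count of OUs that New-ADOrganizationalUnit would be asked to create.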

Next, we will loop through the users. In each pass of the loop, we want to build a splat. Splatting was covered here in a previous blog. In this splat, we are filling in the parameters of the New-ADUser cmdlet. Let’s break it apart.

The Splat

GivenName = $User.GivenName

Surname = $User.Surname

DisplayName = "$($User.GivenName) $($User.MiddleInitial) $($User.Surname)"

Name = "$($User.GivenName) $($User.MiddleInitial) $($User.Surname)"

Description = "$($user.City) - $($User.Color) - $($user.Occupation)"

From the CSV file, we use the given name, surname, and middle initial. With this information, we make the given name, surname, display name, and name. Then we use the city, color, and occupation for the description. Next, we want to build the usernames.

EmailAddress = "$($User.GivenName).$($User.MiddleInitial).$($User.Surname)@$($Domain)"

UserPrincipalName = "$($User.GivenName).$($User.MiddleInitial).$($User.Surname)@$($Domain)"

SamAccountName = "$($User.GivenName).$($User.MiddleInitial).$($User.Surname)"
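As a quick way to see what these three lines produce, they can be run against a single made-up row. The row values and the SamTooLong check are my own additions for illustration. The check is there because, as far as I know, AD limits a user's sAMAccountName to 20 characters, so a long "First.M.Last" name may be rejected by New-ADUser.

```powershell
$Domain = "therandomadmin.com"

# Hypothetical row; in the script this comes from the CSV
$User = [pscustomobject]@{
    GivenName     = 'Maximilian'
    MiddleInitial = 'Q'
    Surname       = 'Featherstone'
}

# Same pattern as the splat: GivenName.MiddleInitial.Surname
$Base = "$($User.GivenName).$($User.MiddleInitial).$($User.Surname)"

$Account = [pscustomobject]@{
    EmailAddress      = "$($Base)@$($Domain)"
    UserPrincipalName = "$($Base)@$($Domain)"
    SamAccountName    = $Base
    # Assumption: user sAMAccountName values longer than 20 characters are refused
    SamTooLong        = $Base.Length -gt 20
}

$Account
```

If SamTooLong comes back true for rows in your CSV, you may want a shorter pattern for SamAccountName in your own lab.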

Using the same structure as the name, we just add dots, and for the email and user principal name, we also add the domain. Then we grab the street address, city, state, country, and home phone.

StreetAddress = $user.StreetAddress

City = $user.City

State = $user.State

Country = $user.Country

HomePhone = $user.TelephoneNumber

Next, we want to do the company information. The department is the color, the title is the occupation, the employee ID is the user's number, the employee number is the national ID (the social) with the dashes removed, and finally the division is the state.

Company = "The Random Admin"

Department = $user.Color

Title = $user.Occupation

EmployeeID = $user.Number

EmployeeNumber = $user.NationalID.replace("-",'')

Division = $user.State

Now that we have the company information, we want to add the account information: whether the account is enabled, whether the password must change at logon, the password itself, and finally the OU. We use the full state name for the OU so that it matches the OUs we built beforehand.

Enabled = $true

ChangePasswordAtLogon = $false

AccountPassword = ConvertTo-SecureString -String "$($user.Password)@$($user.NationalID)" -AsPlainText -Force

Path = "OU=$($User.StateFull),$EmployeePath"

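Finally, we add the common parameters that [CmdletBinding()] gives every cmdlet, like Verbose and ErrorAction. ErrorAction is set to Stop so that a failed New-ADUser lands in the catch block; with SilentlyContinue, the error would be swallowed and the catch would never run.

ErrorAction = "Stop"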

Verbose = $true

Now the splat is done. It’s time to build the try catch with a useful error. By default, the error message is massive, so writing out just the user's name is much more helpful. Inside the try, we splat the parameters into New-ADUser.

try {

New-ADUser @Param

} catch {

Write-Error "$($User.GivenName) $($User.MiddleInitial) $($User.Surname)"

}
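One thing to keep in mind with this pattern is that catch only runs for terminating errors, so the ErrorAction value in the splat decides whether the catch block ever fires. The sketch below shows this with Get-Item on a file that should not exist, as a stand-in for New-ADUser. The function name and the file name are made up for the demo.

```powershell
function Test-CatchFires {
    param(
        [Parameter(Mandatory)]
        [string]$Action
    )

    # Splat with a path that should not exist, plus the ErrorAction under test
    $Param = @{
        Path        = Join-Path ([System.IO.Path]::GetTempPath()) 'no-such-file-bulk-users-demo.txt'
        ErrorAction = $Action
    }

    try {
        Get-Item @Param
        # Reached when the error was not terminating
        return $false
    } catch {
        # Reached only when the error was terminating
        return $true
    }
}

Test-CatchFires -Action 'SilentlyContinue'
Test-CatchFires -Action 'Stop'
```

With SilentlyContinue the function returns False because the failure never reaches the catch. With Stop it returns True.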

That’s all for this script. It’s not hard, but it should allow you to create a lab quickly. You can download the CSV here if you wish.

What can we learn as a person today?

Unlike the gods of old, we are not able to create new people in our lives to meet our needs. Instead, we have to find people. Like we pointed out last week, networking is massive. How we treat others when we network is extremely important. Without networking, we tend to find ourselves in a hole. Imagine a giant hole in the ground with oiled-up smooth metal walls, and all you have to get out is a rope that is at the top of the hole. There is a lot that can happen here. The rope can stay there. Someone can throw you the rope.

Throwing the rope

Someone can throw you the rope and walk away. The rope will land in the hole with you. You can try to throw the rope out, but without something to cling to, the rope will just fall back down to you. This is like the man who says to just study for this exam or that exam. He threw you a rope, but hasn’t really done anything else.

Now imagine if someone secured that rope to something like a car or a rock and threw the other end to you. Now you have something to climb up with. This is the person who has given you resources to look into. For example, I hear you want to get into networking but have no experience. I’m going to say study for the Network+ exam and then tell you about Professor Messer on YouTube. This is super helpful and most people can climb out of the hole with the rope. However, in this scenario, the walls are oiled up. Thus, footing is an issue.

Finally, we have the guy who ties the rope to his car and throws you the other end. Then he backs up his car, pulling you out of the hole. This would be a manager or a senior member of an IT company taking a new person under their wing and leveraging the company to help them learn new things. This is the kind of company I would want to work with.

Final Thoughts

When you are working with people and helping them with their careers, some people just need the rope. Some people need the anchor, and finally some need to be pulled out of the hole. A lot of this is due to society and situations. Being aware of these facts can help you network with others better and grow your support team. Being aware of yourself allows you to know who you need as well. Do you need the truck? Do you need an anchor? What is it that we need to get out of the holes that we find ourselves in? What can we be to others?

by David | Feb 2, 2024 | Help Desk, Information Technology, Resources

Reading Time: 4 minutes

While installing the KB5034439 update, I received an error message of 0x80070643. Google failed me over and over. Every post I saw talked about using DISM commands to repair the update, and none of these resolved the issue. Finally, Microsoft dropped a useful article about the update. The article stated that the update will fail if your recovery partition has less than 250 MB of free space. Well, my recovery partition had only 500 MB of space and only 83 MB of free space. I will go over how to find that information. So, resolving the KB5034439 error was as simple as expanding the recovery partition.

Finding the Recovery Partition Size

So, to find the recovery partition size, I used a simple PowerShell script. The idea behind the script was to grab the drives and the partitions and do some simple math. Of course, this came from Super User. All I did was tweak it a little to reflect my needs.

$disksObject = @()

Get-WmiObject Win32_Volume -Filter "DriveType='3'" | ForEach-Object {

$VolObj = $_

$ParObj = Get-Partition | Where-Object { $_.AccessPaths -contains $VolObj.DeviceID }

if ( $ParObj ) {

$disksobject += [pscustomobject][ordered]@{

DiskID = $([string]$($ParObj.DiskNumber) + "-" + [string]$($ParObj.PartitionNumber)) -as [string]

Mountpoint = $VolObj.Name

Letter = $VolObj.DriveLetter

Label = $VolObj.Label

FileSystem = $VolObj.FileSystem

'Capacity(mB)' = ([Math]::Round(($VolObj.Capacity / 1MB),2))

'FreeSpace(mB)' = ([Math]::Round(($VolObj.FreeSpace / 1MB),2))

'Free(%)' = ([Math]::Round(((($VolObj.FreeSpace / 1MB)/($VolObj.Capacity / 1MB)) * 100),0))

}

}

}

$disksObject | Sort-Object DiskID | Format-Table -AutoSize

What this script does is grab the volumes on the machine that are not removable, like a USB drive. Then we loop through each volume and grab the partition whose access paths contain the volume's device ID. From there, we just pull the data out and do basic math. Finally, we display the information. The important part of the output is the row with the Recovery label. If its free space is less than 250 MB, we are going to have some work to do.
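The math itself can be checked without touching any disks. The sketch below repeats the same rounding as the script on plain numbers and adds a flag for the 250 MB requirement. The function name and the NeedsMoreSpace property are made up for illustration, and the sample numbers are the ones from my recovery partition.

```powershell
function Get-RecoverySpaceSummary {
    param(
        [Parameter(Mandatory)][double]$CapacityBytes,
        [Parameter(Mandatory)][double]$FreeSpaceBytes,
        # Free space the update needs, per the Microsoft article
        [double]$RequiredFreeMB = 250
    )

    $FreeMB = [Math]::Round($FreeSpaceBytes / 1MB, 2)

    [pscustomobject]@{
        'Capacity(MB)'  = [Math]::Round($CapacityBytes / 1MB, 2)
        'FreeSpace(MB)' = $FreeMB
        'Free(%)'       = [Math]::Round(($FreeSpaceBytes / $CapacityBytes) * 100, 0)
        NeedsMoreSpace  = $FreeMB -lt $RequiredFreeMB
    }
}

# 500 MB partition with 83 MB free
$Summary = Get-RecoverySpaceSummary -CapacityBytes (500MB) -FreeSpaceBytes (83MB)
$Summary
```

For these numbers, NeedsMoreSpace comes back true, which matches what I saw: 83 MB free is well under the 250 MB the update wants.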

Clean up the Recovery Partition

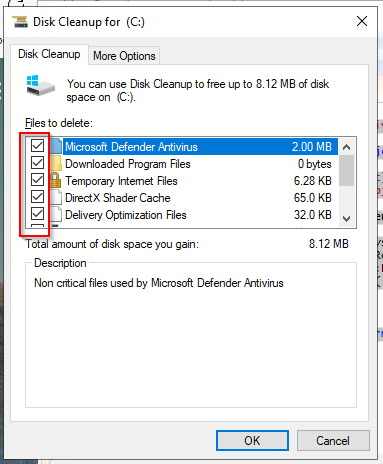

The first thing I tried was using cleanmgr (Disk Cleanup) to clean up the recovery partition. Running it as an administrator will give you this option. Inside the Disk Cleanup software, select everything you can. Then, in the “More Options” tab, you should be able to clean up the “System Restore and Shadow Copies”. After doing these items, run the script again and see if you have enough space. In my case, I did not. Cleaning the recovery partition did not resolve the KB5034439 error.

Growing Recovery

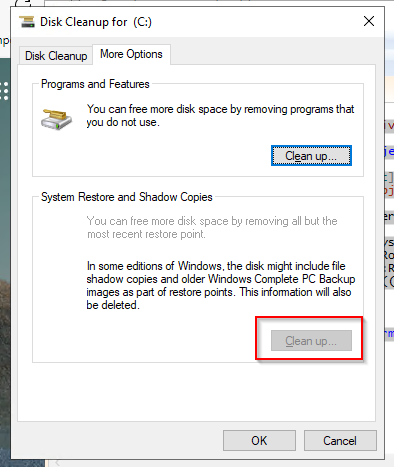

So, the first thing I had to do was go into the partition manager on my server. The recovery partition in my case was at the end of the drive. The partition next to the recovery partition was thankfully my main partition. I shrank my main partition by 1 GB. That was the easy part. Now the hard part: I had to rebuild my recovery partition inside that freed space. These are the steps on how to do that.

- Start CMD as administrator.

- Run reagentc /disable to disable the recovery system.

- Run DiskPart

- Run List Disk to find your disk.

- Run Select Disk # to enter the disk you wish to edit.

- Run List Partition to see your partitions. We want the Recovery partition.

- Run select partition #. In my case, this is partition 4. The recovery partition.

- Run delete partition override. This will delete the partition. If you don’t have the right one selected, get your backups out.

- Run list partition to confirm the partition is gone.

- Now, inside your partition manager, click Action > Refresh

- Select the free space and select New Simple Volume

- Inside the Assign Drive Letter or Path window, select the “Do not assign a drive letter or drive path” radio button and click Next

- Inside the Format Partition window, change the volume label to Recovery and click Next

- This will create the new partition. Navigate back to your command prompt with DiskPart

- Run list partition

- Run select partition # to select your new partition.

The next commands depend on the type of disk you are working with. The output of the first list disk shows a star under Gpt if the disk is a GPT disk.

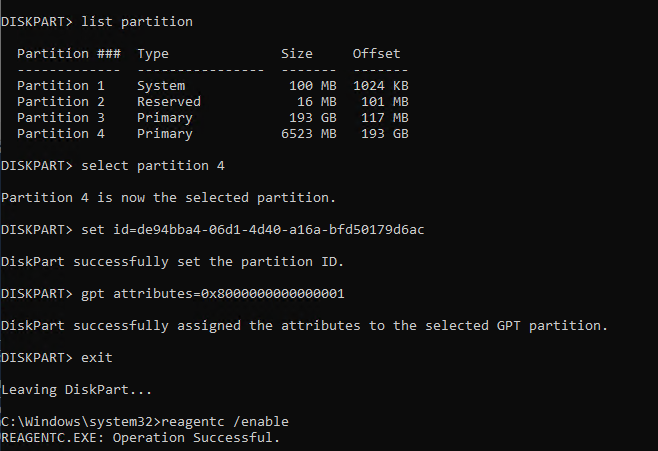

GPT Disk

- Run set id=de94bba4-06d1-4d40-a16a-bfd50179d6ac

- Run gpt attributes=0x8000000000000001

- Run Exit to exit diskpart.

- Run reagentc /enable to re-enable the recovery partition.

- This command moves the .wim file that the disable step placed in c:\windows\system32\recovery back into the recovery partition.
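The steps above only list the commands for a GPT disk. As a hedged sketch, the helper below (a made-up name) returns the diskpart lines for each partition style so you can see them side by side. The MBR value, set id=27, is not from my steps above. It is what I understand Microsoft's guidance for this update to say, so verify it before relying on it.

```powershell
function Get-RecoveryIdCommand {
    param(
        [Parameter(Mandatory)]
        [ValidateSet('GPT', 'MBR')]
        [string]$PartitionStyle
    )

    if ($PartitionStyle -eq 'GPT') {
        # The two GPT commands from the steps above
        'set id=de94bba4-06d1-4d40-a16a-bfd50179d6ac'
        'gpt attributes=0x8000000000000001'
    } else {
        # Assumption: MBR recovery partitions use type 27
        'set id=27'
    }
}

# Only prints the lines; nothing is sent to diskpart
Get-RecoveryIdCommand -PartitionStyle 'GPT'
```

On Windows, (Get-Disk -Number 0).PartitionStyle reports GPT or MBR if you would rather not read the star in the list disk output.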

Finally, run your Windows updates. Resolving the KB5034439 error is a little scary if you have a server that is more complex. Thankfully, it wasn’t that complex on my end. You will have to adapt your approach to match what is needed.